Assistive Listening Dials In

With smartphones capable of communicating with just about everything, it’s no surprise that technology developers are applying them in the realm of assistive listening. Not only does this setup eliminate much of the hassle associated with dedicated devices, it also enables facilities to reach a wider audience.

“The solutions on the market with dedicated receiver devices that run on radio frequencies or even infrared technology require lots of maintenance: you have to collect these devices after the event; you have to clean them; you have to make sure they’re loaded; and if you do it properly, you have to replace the cushions on the ear pads. There’s a lot of maintenance, and if you want to rent them for a single event, it’s expensive,” said Reto Brader, CEO at Barix, developer of IP-based audio communications solutions. “With this high-end computer that is in everybody’s pocket these days, of course we’re using the mobile device as an assistive listening receiver and then feeding it using standard technology: Bluetooth or Wi-Fi.”

Recently, SCN explored some of the latest solutions in the marketplace. Here is what we found.

Barix

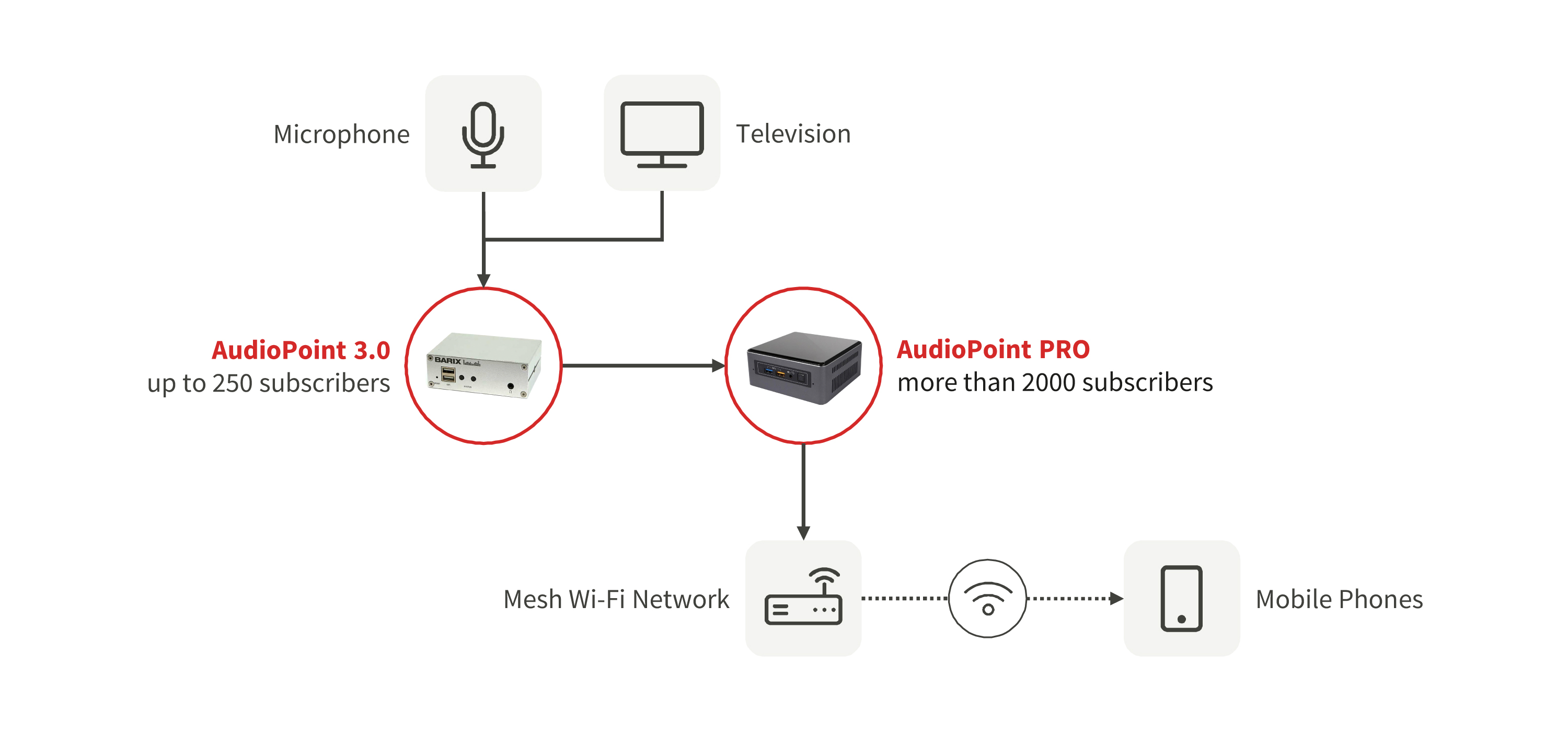

Barix’s AudioPoint 3.0 audio-to-mobile platform uses IP audio and a Wi-Fi network for live presentations and digital signage applications. A unicast stream delivers near lip-sync audio to a user’s mobile phone via a free iOS and Android app. The encoder hardware in AudioPoint 3.0 supports up to 250 listeners, depending on the software.

“Our second-generation system worked on multicast, and many networks limit the amount of traffic [available] for multicast,” Brader explained. “Our version 3.0 is based on unicast: there is a point-to-point connection between the encoder and the users.”
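
To make the unicast side of that distinction concrete, here is a minimal Python sketch of unicast fan-out, assuming plain UDP datagrams. The function name `unicast_fanout` is hypothetical and nothing here reflects Barix’s actual protocol; the point is simply that with point-to-point delivery, the encoder transmits one copy of every audio frame per listener.

```python
import socket
from typing import Sequence, Tuple


def unicast_fanout(frame: bytes, listeners: Sequence[Tuple[str, int]]) -> int:
    """Send a separate copy of one audio frame to each listener address.

    Returns the total bytes handed to the network. The total grows linearly
    with the number of listeners, because each listener has its own
    point-to-point flow. (Hypothetical helper, not vendor code.)
    """
    sent = 0
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        for address in listeners:
            sent += sock.sendto(frame, address)
    return sent
```

Doubling the listener list doubles the traffic the encoder and the Wi-Fi network must carry, which is why unicast systems quote a listener ceiling.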

This configuration requires integrators to ensure that the Wi-Fi network supports low-latency links: “That’s being done by having it support the QoS settings; there is a flag that gives you priorities on the packets. Professional Wi-Fi encoders have the capability to set this, and our data sheet [specifies] which [packets] we have flagged.”
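
The “flag” Brader describes is most likely the DSCP field in the IP header; that is an assumption, and the exact markings are listed in Barix’s data sheet. As a sketch, an application on Linux can mark its outgoing UDP packets with DSCP 46 (Expedited Forwarding), the class conventionally used for voice. The constant and helper names are illustrative only.

```python
import socket

# Assumption: EF is used here only as a typical voice marking; the values
# Barix actually flags are documented in its data sheet, not here.
DSCP_EF = 46


def tos_from_dscp(dscp: int) -> int:
    """DSCP occupies the upper six bits of the IPv4 TOS byte."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0..63")
    return dscp << 2


def marked_udp_socket(dscp: int = DSCP_EF) -> socket.socket:
    """Create a UDP socket whose outgoing IPv4 packets carry the given DSCP."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, tos_from_dscp(dscp))
    return sock
```

RFC 8325 recommends that Wi-Fi access points map EF to 802.11 user priority 6, which falls in the WMM Voice access category; whether a given access point honors the marking depends on how it is configured.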

Barix’s AudioPoint Pro server distributes the output from AudioPoint 3.0 to over 2,000 concurrent listeners via multicast over professionally designed mesh Wi-Fi networks with QoS (Quality of Service) management and the necessary network bandwidth.
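
By contrast, a multicast sender transmits each frame once to a group address, and the network replicates it for every listener that has joined. The sender-side sketch below is generic Python, not code from AudioPoint Pro; the helper names are hypothetical, as is any group address used with them (for example 239.1.2.3 from the administratively scoped range).

```python
import ipaddress
import socket


def multicast_sender(ttl: int = 1) -> socket.socket:
    """Create a UDP socket for sending to an IPv4 multicast group.

    A TTL of 1 keeps packets on the local subnet, a reasonable default for a
    single-venue deployment (an assumption, not a vendor setting).
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_MULTICAST_TTL, ttl)
    return sock


def send_frame(sock: socket.socket, group: str, port: int, frame: bytes) -> int:
    """Send one audio frame once; the network copies it to every joined listener."""
    if not ipaddress.ip_address(group).is_multicast:
        raise ValueError(f"{group} is not a multicast address")
    return sock.sendto(frame, (group, port))
```

The sender’s workload stays constant as listeners are added, which is what lets a multicast server reach audiences in the thousands; the cost shifts to the network, which has to be designed to carry it.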

Brader noted that as the listener count increases, so, too, does the work involved in configuring the Wi-Fi network. “There are a few parameters that need to be set. Many solutions are good for 12 or 15 listeners, so it’s perfect for a few people in a museum or even at home, but when you want to go beyond that number, you have to take care of the network.”
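
Some back-of-the-envelope arithmetic shows why the network work grows with the audience. The sketch below assumes a hypothetical 128 kbps stream and counts audio payload only, with no packet or Wi-Fi overhead; real figures depend on the codec and the network.

```python
def unicast_load_kbps(listeners: int, stream_kbps: float) -> float:
    """Unicast: each listener receives its own copy of the stream."""
    return listeners * stream_kbps


def multicast_load_kbps(channels: int, stream_kbps: float) -> float:
    """Multicast: one copy per channel, shared by every listener on it."""
    return channels * stream_kbps


STREAM_KBPS = 128  # hypothetical per-stream bit rate, payload only

for n in (15, 250):
    print(f"{n} unicast listeners: {unicast_load_kbps(n, STREAM_KBPS):,.0f} kbps")
print(f"1 multicast channel: {multicast_load_kbps(1, STREAM_KBPS):,.0f} kbps")
```

Under these assumptions, 15 listeners need under 2 Mbps, while 250 need about 32 Mbps of aggregate payload. Multicast is not free on Wi-Fi either: multicast frames are typically sent unacknowledged at a low basic data rate, so each bit costs more airtime, which is part of the network tuning larger deployments require.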

Listen Technologies

Assistive listening products manufacturer Listen Technologies offers Listen Everywhere, a Wi-Fi-based system designed to support thousands of listeners and over 50 channels. Listeners receive audio on their smartphones and tablets through a free app in facilities that are equipped with Listen Everywhere hardware.

Facilities may customize the app to incorporate their own branding; they may also upload advertising, coupons, and information about promotions, as well as links to meeting agendas, class notes, and menus. Listen Everywhere is suited to venues such as indoor arenas and airports, and it can be used alongside the company’s other ADA (Americans with Disabilities Act) assistive listening systems, such as ListenRF, ListenIR, or ListenLOOP.

“Listen Everywhere allows users to engage with their personal devices as they already do every day, but now they actually have that direct hearing link to that personal device,” said Doug Taylor, chief product officer at Listen Technologies.

Williams AV

Williams AV’s WaveCAST Dante assistive listening system delivers wireless audio to personal devices via a free app. Rob Sheeley, president and CEO of the company, said that one of the things that makes this solution unique is that it incorporates a professional audio DSP. “It’s got real professional audio inputs and outputs; it’s got the ability to be optimized for speech intelligibility, music, or for assistive listening. You can tweak it to match the facility and the environment,” he said. Up to four WaveCAST systems can be configured on the same Wi-Fi network, delivering audio to up to 45 listeners via unicast or 200 in multicast mode. WaveCAST Dante is based on Audinate’s Dante Ultimo UXT chipset that supports AES67 and SMPTE 2110. It can be controlled by Audinate’s Dante Controller for setup and management.
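
AES67 support implies a particular stream format: the standard requires support for 48 kHz sampling, 16- or 24-bit linear PCM (L16/L24), and a 1 ms packet time. The sketch below estimates the network load of one such stream. It assumes L24 audio over IPv4, ignores Ethernet framing, and uses hypothetical helper names; it says nothing about how WaveCAST itself encodes audio for phones.

```python
# Header sizes in bytes: RTP fixed header (no extensions), UDP, IPv4 (no options).
RTP_BYTES, UDP_BYTES, IPV4_BYTES = 12, 8, 20


def aes67_packet_bytes(channels: int, sample_rate_hz: int = 48_000,
                       packet_time_ms: float = 1.0, bytes_per_sample: int = 3) -> int:
    """Size of one RTP/UDP/IPv4 packet carrying linear PCM audio."""
    samples_per_channel = round(sample_rate_hz * packet_time_ms / 1000)
    payload = samples_per_channel * channels * bytes_per_sample
    return payload + RTP_BYTES + UDP_BYTES + IPV4_BYTES


def aes67_stream_mbps(channels: int, packet_time_ms: float = 1.0) -> float:
    """IP-layer bit rate of one stream, in megabits per second."""
    packets_per_second = 1000 / packet_time_ms
    size = aes67_packet_bytes(channels, packet_time_ms=packet_time_ms)
    return size * 8 * packets_per_second / 1e6
```

With these defaults, a stereo stream works out to 328-byte packets at 1,000 packets per second, or roughly 2.6 Mbps.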

Williams AV also offers the FM+ Dante, which incorporates WaveCAST server technology into the manufacturer’s FM assistive listening system to deliver both FM and Wi-Fi audio, depending on the listener’s preference. Sheeley explained that this solution accommodates users who prefer the simplicity of an FM system, as well as those who desire the discretion of receiving assistive listening audio through their personal mobile devices. “It’s less intrusive, and they can set it up [discreetly] and use their own headphones or earbuds, and not have to worry about people seeing them using an assistive listening device,” he said.

The FM+ also provides facilities with increased flexibility, since they no longer have to choose between providing Wi-Fi or FM-based systems; with this solution, they can provide both. As its name suggests, the FM+ Dante features a Dante audio input.

Like Brader, Sheeley touted the flexibility of Wi-Fi assistive listening solutions; however, he urged AV integrators to pay attention to how the system is configured to ensure optimum performance. “Know your Wi-Fi routers: different routers have different capabilities,” he said. “Make sure you select and [configure] a Wi-Fi router system that’s capable of supporting the amount of calls that you expect to handle with this.”

And once again, the more listeners there are, the more complex Wi-Fi network configuration becomes. “With our Wi-Fi system, we can handle 45 unicast receivers at one time, and when we go multicast, we can go up to 1,500,” Sheeley explained. “Multicast will always be a more robust way to deliver audio to multiple users, but it requires a little bit more work on the IT side to make sure that all of the equipment is multicast-enabled.”
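
On the receiving side, making equipment “multicast-enabled” largely comes down to IGMP: when a device joins a group, its operating system sends an IGMP membership report, and switches with IGMP snooping use those reports to forward the stream only to ports that requested it. Below is a generic Python receiver sketch, not any vendor’s app; the helper names are hypothetical, as is the example group 239.1.2.3.

```python
import ipaddress
import socket
import struct


def membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """Pack an ip_mreq structure: group address, then local interface address."""
    if not ipaddress.ip_address(group).is_multicast:
        raise ValueError(f"{group} is not a multicast address")
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(interface))


def join_group(group: str, port: int, interface: str = "0.0.0.0") -> socket.socket:
    """Bind a UDP socket and join a multicast group on the given interface."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    # Joining causes the OS to send an IGMP membership report; IGMP-snooping
    # switches use it to decide which ports should receive the group.
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    membership_request(group, interface))
    return sock
```

If the switches or access points between the server and listeners do not handle IGMP correctly, a multicast stream may be flooded everywhere or never reach the listeners at all, which is the IT-side work Sheeley refers to.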

Speech Easy

Broadcast solutions developer ENCO offers enCaption4, a real-time, automated speech-to-text engine designed for captioning live presentations.

Available as an on-premises or cloud-based solution, it can process speech from a single microphone at a single location or distribute captions across the enterprise from a cloud server. enCaption4 features NDI compatibility for video production and streaming applications, so users in these environments no longer need specialized encoding hardware for captioning.

Carolyn Heinze has covered everything from AV/IT and business to cowboys and cowgirls ... and the horses they love. She was the Paris contributing editor for the pan-European site Running in Heels, providing news and views on fashion, culture, and the arts for her column, “France in Your Pants.” She has also contributed critiques of foreign cinema and French politics for the politico-literary site, The New Vulgate.