Cloud Power: The Evolution of Virtualized Production

Welcome to the world of software-defined workflows. Here's why that's a good thing.

With so many terms floating around the media and entertainment tech space, it helps to agree on definitions. When I think of an on-prem, software-defined infrastructure, one example that comes to mind is the NewTek (now Vizrt) TriCaster. People tend not to think of it as a software-defined product because it’s sold as an appliance. But TriCaster is intrinsically a Windows computer with video and audio I/O. With its processing power, it is a software-defined engine.

If you extend that thinking into how we work today, almost everything is plugged into an IP data network. And NDI becomes yet another extension of a software-defined workflow.

Previously, a lack of compute power made software-defined workflows at high production values impossible. Only with dedicated hardware could we provide high-quality, sophisticated audio and video processing. This is most obvious in equipment like video switchers and audio consoles, which perform large numbers of computationally complex operations in the time of a single frame of video or just a few audio samples.
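To put rough numbers on that time budget, here is a minimal sketch. The 59.94 fps frame rate, the 48 kHz sample rate, and the 32-sample block are assumed example values, not figures tied to any particular product.

```python
from fractions import Fraction

# Assumed example formats; chosen only for illustration.
FRAME_RATE = Fraction(60000, 1001)   # 59.94 fps video
SAMPLE_RATE = 48_000                 # 48 kHz audio

def frame_budget_ms(frame_rate):
    """Time available to process one video frame, in milliseconds."""
    return float(1000 / frame_rate)

def sample_budget_us(sample_rate, samples=1):
    """Time spanned by `samples` audio samples, in microseconds."""
    return samples * 1_000_000 / sample_rate

print(f"{frame_budget_ms(FRAME_RATE):.2f} ms per frame")            # 16.68 ms per frame
print(f"{sample_budget_us(SAMPLE_RATE, 32):.1f} us for 32 samples")  # 666.7 us for 32 samples
```

Under these assumptions, a switcher has under 17 milliseconds to finish all of its work on a frame, and an audio processor working in 32-sample blocks has well under a millisecond.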

What we see now is general-purpose computing, especially with the addition of GPU-based computing, removing the need for dedicated, specialized processing hardware. Because of the increased speed of general-purpose computing, we don’t need dedicated FPGAs or audio DSP chips to build fast video switchers and audio processors. This has allowed us to move quickly toward software-defined infrastructures.

It's About the Infrastructure

When I talk about virtual production, I’m not referring to virtual reality, extended reality, or virtual sets. I’m speaking about virtualized production infrastructure, which is, by definition, software-defined because it’s not confined to one piece of physical equipment.

The epitome of virtualization is every hyperscaler running applications on virtual computing machines. Those applications are not running on your laptop or local machine. They’re running in the cloud and use your machine as a display and I/O terminal. Virtualized production infrastructure typically means you’re not on premise with dedicated hardware, but taking an application and running it in containers such as Docker in a multitasking way that enables talent to operate from wherever they are.

In a private cloud setup, virtualized systems run in the same way as the public cloud, but computing power lives on premise. In a public cloud scenario, infrastructure is rented from a hyperscaler. The server does not live in your facility.

Virtualized infrastructure means there is not a dedicated box running particular software—and within a software-defined infrastructure, you may or may not have that software on premise. It could be running in a cluster of servers under a hypervisor. (A hypervisor is software that can run multiple virtual machines on a single, physical machine, and allocates computing resources such as CPU and memory to individual machines as required.)
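The resource allocation described above can be pictured with a toy model. This is only a sketch: the `Host` class, its methods, and the VM names are hypothetical, and it does not correspond to any real hypervisor's API. It also assumes no overcommitment, whereas real hypervisors can share cores and memory more flexibly.

```python
from dataclasses import dataclass, field

@dataclass
class Host:
    """Toy model of one physical machine managed by a hypervisor (hypothetical)."""
    cpu_cores: int
    memory_gb: int
    vms: dict = field(default_factory=dict)  # VM name -> (cores, memory_gb)

    def free(self):
        """Return (free cores, free memory in GB) not yet assigned to a VM."""
        used_cores = sum(cores for cores, _ in self.vms.values())
        used_mem = sum(mem for _, mem in self.vms.values())
        return self.cpu_cores - used_cores, self.memory_gb - used_mem

    def start_vm(self, name, cores, memory_gb):
        """Start a VM only if the host has enough free resources."""
        free_cores, free_mem = self.free()
        if cores > free_cores or memory_gb > free_mem:
            return False  # simplification: no overcommit in this sketch
        self.vms[name] = (cores, memory_gb)
        return True

    def stop_vm(self, name):
        """Stop a VM and release its resources back to the host."""
        self.vms.pop(name, None)

host = Host(cpu_cores=32, memory_gb=128)
host.start_vm("switcher", cores=16, memory_gb=64)
host.start_vm("audio-mixer", cores=8, memory_gb=32)
print(host.free())  # (8, 32)
```

Stopping the hypothetical "switcher" VM would return its 16 cores and 64 GB to the pool, where they could be handed to a different application on the same physical machine.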

Software-defined infrastructure is a prerequisite for virtualized infrastructure. You can have software-defined infrastructure that lives on your premises, but you can’t have virtualized infrastructure that is not software-defined.

TriCaster, again, is a great example of software-defined infrastructure that lives on premise. As we transition to virtualized infrastructures, most on-premise facilities will look more like cloud-based systems. Stacks of servers will run on a high-speed network, with a hypervisor time slicing those machines and configuring them as required.

Benefits and Advantages

The pace of change will be rapid. In a software-defined environment, the only required hardware that’s not commercial off-the-shelf (COTS) is the control surface, and even that can be virtual rather than physical.

If I have a computer fast enough to process 256 channels of HD video, that hardware can run any software application I choose. For example, if I’m running Vizrt’s Viz Vectar Plus, but Grass Valley comes out with a switcher that I’d prefer for a particular job, I can run that new Grass Valley switcher on the same server in place of Vectar.

That’s where software-defined infrastructure is a huge advantage for clients. We can redefine workflows and tools without having to change (or, at most, minimally change) any physical infrastructure. Previously, if I’d bought a physical Sony switcher and my TD changed his mind and now wanted the features of a Grass Valley switcher, I’d literally have to rip out the Sony switcher, rewire my facility, and replace the switcher.

A software-defined infrastructure gives clients the freedom to experiment. You could potentially run short engagements of different products within a software-based system. Where the computing is done, on premise or in the cloud, becomes a less rigid and more agile decision. Users get to choose whether on-premise, cloud, or hybrid is the best approach to run any given part of their infrastructure.

Plus, software-defined infrastructures provide the ability to break down the barriers between what is on premise, what is remote, and what is in the cloud. They also hold the promise of operating more cost-effectively and enabling creatives to work in new ways.

Smooth Transitions

As integrators, the first thing we must do is get comfortable with IP transport workflows, be it SMPTE ST 2110, NDI, Dante AV, or whatever is appropriate in your client’s applications. You must be very comfortable in that kind of signal transport, or you won’t be able to deploy any of it.

Looking further, we need to understand computing technology beyond the desktop and beyond the server. Start to build your learning around cluster computing and how resources can be shared to perform many actions simultaneously. These are the next steps that we all must take to move forward with the industry.

We’re already seeing software-defined infrastructure within existing physical signal flows. It’s happening with software-defined audio, as many higher-end broadcast mixing consoles are software-defined devices. SSL’s System T broadcast audio platform is a great example of software-defined audio infrastructure: a dedicated computer running on Intel processors, with a control surface over a very complex software device.

Now, we’re starting to see that transition on the video side. It always happens first in audio because the bandwidth of audio data is far less than that of video. The next in the chain to transition will be video switchers and video effects. Gallery’s Sienna is a virtualized processor for audio and video signals that can be used as a router, video switcher, frame sync, or audio cue. And it has more than 250 processing modules.
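The bandwidth gap between audio and video mentioned above is easy to estimate. The following is a rough sketch under assumed formats: uncompressed 1080p video at 60 frames per second with 10-bit 4:2:2 sampling (an average of 20 bits per pixel, active picture only) and a single 48 kHz, 24-bit audio channel. Real transport formats add overhead that is ignored here.

```python
# Assumed example formats; real IP transports add packet and blanking overhead.
WIDTH, HEIGHT = 1920, 1080
FRAMES_PER_SECOND = 60
BITS_PER_PIXEL = 20          # 10-bit 4:2:2 averages 20 bits per pixel

AUDIO_SAMPLE_RATE = 48_000   # samples per second
AUDIO_BIT_DEPTH = 24         # bits per sample, one channel

def video_bits_per_second():
    """Uncompressed active-picture video data rate in bits per second."""
    return WIDTH * HEIGHT * BITS_PER_PIXEL * FRAMES_PER_SECOND

def audio_bits_per_second(channels=1):
    """Uncompressed PCM audio data rate in bits per second."""
    return AUDIO_SAMPLE_RATE * AUDIO_BIT_DEPTH * channels

video = video_bits_per_second()
audio = audio_bits_per_second()
print(f"video: {video / 1e9:.2f} Gb/s")             # video: 2.49 Gb/s
print(f"audio: {audio / 1e6:.3f} Mb/s")             # audio: 1.152 Mb/s
print(f"ratio: {video // audio} audio channels")    # ratio: 2160 audio channels
```

Under these assumptions, one HD video stream carries as much data as roughly two thousand audio channels, which helps explain why general-purpose computers could take over audio processing years before video.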

There are many more products with a similar virtualized design on the way from various manufacturers. If you can do your work using generalized hardware without changing the rest of the workflow, your box in the chain just got a lot more flexible and a lot less expensive. When you begin to take note of the underlying technology behind these developments, you’ll start to see why they’re beneficial for the industry and why they’re growing so rapidly.

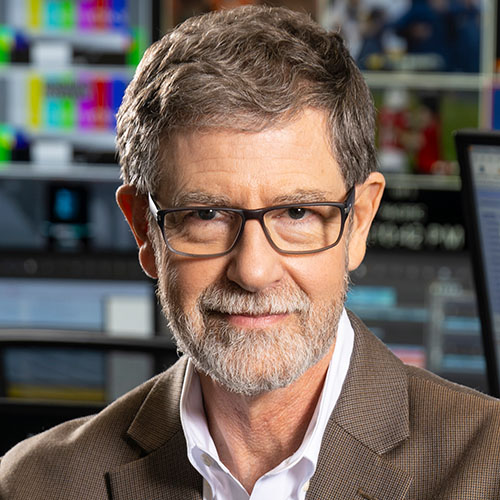

Dave Van Hoy is the president of Advanced Systems Group, and was named to the SCN Hall of Fame in 2026.