Master Class: Sound Reinforcement Techniques for Live AV

WHAT EVERY TECH MANAGER NEEDS TO KNOW ABOUT LIVE SOUND SUPPORT

The concept of a sound reinforcement system is simple. It begins with a sound source: something that you want to make louder. It could be a human voice, musical instrument, CD/MP3 player, pre-recorded audio track or message, or any number of source devices. When there is more than one source, a mixer is used to balance their levels and combine them into a signal that is passed on to the audio power amplifier. The amplifier increases the level of the signal sent to the loudspeaker, which delivers the sound to the listening environment. Pretty simple?

It is, until you consider the effect of acoustics, which is the study of waves moving in a medium. In the world of sound, we’re mostly concerned with how sound waves alternately compress and “de-compress” the air in our listening environment. We often think of acoustics as only impacting the room (or space) in which the sound system is heard. In fact, acoustics affects the sound quality at both ends of our simplified signal chain.

In order to be amplified, any sound must first be converted into an electrical signal. For acoustic sources, like a human voice or a musical instrument, it’s the microphone’s job to convert acoustic energy into electrical energy.

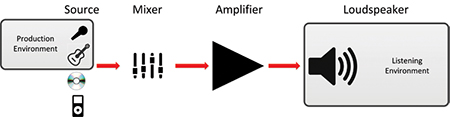

Simplified schematic of a sound system

Once the sound is represented as an electrical signal, it can be mixed, processed (and/or stored along the way), and amplified before it’s finally sent to the loudspeaker, where it is converted back into acoustic energy. Professional sound system designers carefully select the most appropriate components given the specific requirements and budget of the application. There are two “wild cards”, however, which are often underestimated or even completely overlooked: the room (or space) in which the sound is produced, and the room (or space) in which it is ultimately listened to. Yet these acoustical environments have a significant impact on the sound quality heard by the listeners.

It would be ideal if we could control the acoustics of these environments, but with live AV events, it’s not always possible. Events happen with levels of planning that span the spectrum, from impromptu executive briefings to large-scale presentations planned months in advance. Even with the best technology and equipment at your disposal, the quality of sound is often at the mercy of what happens to it in these two spaces.

PRODUCTION ENVIRONMENT

The sound production environment refers to the space or room where the acoustical sound is actually produced. For live AV events, common production spaces include conference rooms, meeting rooms, lecture halls, performance spaces and outdoor environments. At this end of the signal chain, it’s all about the microphone and its interaction with the space.


Sound system performance is affected by the acoustical environments in which sound is both created and played back. Unlike the human ear-brain system, a microphone can’t determine which sounds are important and which are “noise.” When a microphone is used to pick up the sound produced by a talker or musical instrument, it can’t avoid picking up some of the sound of the space at the same time. In an outdoor environment, this is usually limited to ambient sounds such as wind, birds, traffic, and airplanes: unwanted sounds that are produced by other sound sources.

Indoors, the sound of the space is created by the interaction of the sound source, the sound system, and the room acoustics. Even a single human voice speaking into a microphone can produce reflections from hard surfaces in the room (for example, the top of a lectern, a conference table, walls, ceilings, floors), and these reflections are also picked up by the microphone.

While the “sound of the space” adds characteristics which identify the physical location and conditions of the event, it can also significantly affect the quality of the communication by degrading speech intelligibility and clarity.

In both outdoor and indoor environments, there are steps that can be taken to minimize these effects. The first general rule is to put the microphone as close to the source as practical. This minimizes the pick-up of unwanted sound (like acoustical reflections or background noise) that can degrade the quality and intelligibility of the sound you want to amplify. (Note the word “practical”; it’s not always preferable to put the microphone as close as possible to the source. Some types of microphones may produce excessive “boominess” if the talker’s mouth is too close. This bass boost is called “proximity effect,” and it occurs in directional microphones.)
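To put a rough number on the “close as practical” rule, here is a minimal sketch based on the inverse square law. It assumes an idealized point source in a free field and an ambient noise level that stays the same wherever the microphone is placed. Neither assumption holds exactly in a real room, and the distances are illustrative only.

```python
import math

def level_change_db(d_ref_m: float, d_new_m: float) -> float:
    """Change in direct-sound level (dB) when a microphone moves from
    d_ref_m to d_new_m away from an idealized point source in a free field."""
    return 20.0 * math.log10(d_ref_m / d_new_m)

# Illustrative distances: a lectern microphone moved from 60 cm to 15 cm.
gain_db = level_change_db(0.60, 0.15)

# If the ambient noise at the microphone is unchanged (an assumption),
# the wanted-sound-to-noise ratio improves by the same amount.
print(f"Direct sound rises by about {gain_db:.1f} dB")  # about 12 dB
```

Each halving of the distance adds roughly 6 dB of direct sound, which is why moving a microphone even a short way toward the talker can make an audible difference.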

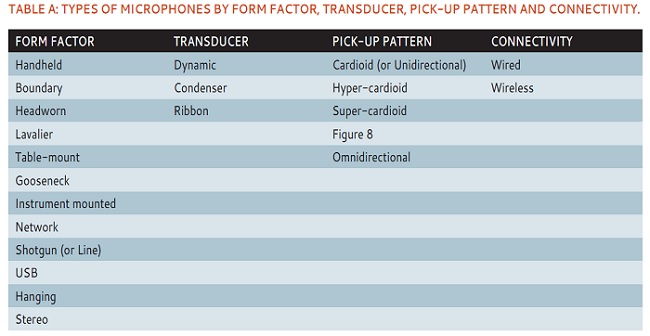

For any given application, there are specific types of microphones which will work best. Microphones are described by their form factor, transducer type, pick-up pattern, and connectivity. While a comprehensive guide to selecting the right microphone for every application you may encounter would be beyond the scope of this article, Table A provides a listing of the types that are available and some of the decision factors to consider when making a selection.

Line array columns are useful in large highly reverberant spaces like houses of worship because of their highly controlled directivity (coverage) pattern. In this image of St Mirin’s, the form factor of the Entasys speakers by Community Professional blends in seamlessly, almost invisibly, with the interior decor.

Microphones with directional characteristics minimize the pick-up of unwanted or extraneous sound. These include all of the listed pick-up patterns except for omnidirectional. In live events, cardioid-type microphones will also minimize the level of sound fed back into the sound system from nearby (or mis-aimed) loudspeakers, reducing the incidence of the dreaded feedback.
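The effect of a directional pick-up pattern can be illustrated with the textbook first-order model, in which relative sensitivity at angle θ is a + (1 − a)·cos θ. The sketch below is an idealization: the coefficient values are commonly quoted nominal figures rather than measurements of any particular microphone, and the pattern names are assumptions that may not match the types listed in Table A.

```python
import math

# Nominal first-order pattern coefficients "a" (idealized, not measured data).
PATTERNS = {
    "omnidirectional": 1.0,
    "cardioid": 0.5,
    "supercardioid": 0.37,
    "hypercardioid": 0.25,
    "bidirectional": 0.0,
}

def relative_sensitivity_db(pattern: str, angle_deg: float) -> float:
    """Sensitivity relative to on-axis (0 degrees), in dB, for an ideal pattern."""
    a = PATTERNS[pattern]
    s = abs(a + (1.0 - a) * math.cos(math.radians(angle_deg)))
    if s < 1e-9:
        return float("-inf")  # ideal null: no pick-up at this angle
    return 20.0 * math.log10(s)

# Compare pick-up from the side (90 degrees) and from the rear (180 degrees).
for name in PATTERNS:
    side = relative_sensitivity_db(name, 90)
    rear = relative_sensitivity_db(name, 180)
    print(f"{name:16s} side: {side:7.1f} dB   rear: {rear:7.1f} dB")
```

In this model an ideal cardioid has its null directly behind the microphone, which suggests why aiming the rear of a cardioid at a stage monitor helps control feedback. Real microphones only approximate these patterns, and their rejection varies with frequency.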

LISTENING ENVIRONMENT

The listening environment is the space in which the loudspeakers project sound. Because the acoustics of the space can have such a profound effect on the quality of sound heard, sound professionals always consider the listening environment as part of the “system.”

Just like the microphone-room interaction, the interaction of the loudspeaker and room is affected (and can be managed) by the type and selection of equipment. The oft-quoted objective is to “put the sound where you want it, and not where you don’t”. As obvious as it sounds, it’s not so easy to achieve in practice because not all loudspeakers control sound equally. But the basic rule of thumb is the same as for microphone use: put the loudspeaker (or loudspeakers) as close to the listeners as practical. Indoor room reflections caused by hard surfaces will combine with the sound from the loudspeakers to degrade the intelligibility of the sound heard by the listeners. Even in outdoor applications, distant listeners may hear more ambient noise than the desired sound from the loudspeakers.

In highly reverberant indoor spaces, loudspeakers with controlled directivity are likely to do a better job of directing sound to the listeners and not to reflective surfaces such as hard walls, ceilings, and the floor. Line array systems have become an extremely popular choice for larger, highly reverberant venues because they offer well-controlled dispersion in the vertical plane. A line array is a densely packed cluster of identical loudspeakers arranged in a line. For large spaces, line arrays are usually suspended on either side of a stage area. Smaller versions are built into single column-type enclosures for use in smaller spaces. Although the proper implementation of a line array requires significant expertise, its narrow vertical dispersion is helpful because less sound is reflected from ceilings and floors.
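One way to see why directivity matters in a reverberant room is the “critical distance,” the distance from a loudspeaker at which direct and reverberant sound are equally strong. The article does not use this term; the sketch below borrows a widely quoted approximation from statistical room acoustics, Dc ≈ 0.057·√(Q·V/RT60), which assumes a diffuse reverberant field. The room volume, reverberation time, and directivity factors (Q) are hypothetical values chosen only for illustration.

```python
import math

def critical_distance_m(q: float, volume_m3: float, rt60_s: float) -> float:
    """Approximate critical distance in meters.

    q         -- loudspeaker directivity factor (1 = omnidirectional)
    volume_m3 -- room volume in cubic meters
    rt60_s    -- reverberation time in seconds
    Assumes a diffuse reverberant field (a simplification).
    """
    return 0.057 * math.sqrt(q * volume_m3 / rt60_s)

# Hypothetical reverberant hall.
VOLUME_M3 = 5000.0
RT60_S = 2.5

for label, q in [("wide-coverage loudspeaker", 2.0),
                 ("controlled-directivity loudspeaker", 10.0)]:
    dc = critical_distance_m(q, VOLUME_M3, RT60_S)
    print(f"{label}: critical distance is roughly {dc:.1f} m")
```

Under these assumptions, the more directional loudspeaker keeps listeners in predominantly direct sound out to a greater distance, which is consistent with the advice to choose controlled directivity and to keep loudspeakers close to listeners.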

Wireless microphone systems are becoming increasingly popular for live events and conferences. Featured is the Revolabs Executive HD series.

Conversely, Rusty Wait, VP of sales with Eastern Acoustic Works, stated, “We’re actually having a lot of success with our point source QX boxes in houses of worship after convincing people that they don’t actually need a line array for their rooms.”

A distributed sound system is another common method of getting the sound closer to listeners. A distributed loudspeaker system consists of multiple similar (or identical) loudspeakers spread throughout the listening area. For extremely large spaces where the audio is linked to a visual presentation, it’s important to be able to control the level of each individual loudspeaker (or at least subgroups of them), and to be able to delay the signal to the most distant ones to minimize distracting sight-to-sound synchronization issues.
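As a rough guide to how much delay a distant loudspeaker might need, a common starting point is the time sound takes to travel from the main source to that loudspeaker’s position. The sketch below assumes a speed of sound of about 343 m/s (dry air near 20 °C) and uses hypothetical distances. In practice the final values are usually adjusted by measurement and by ear.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate, dry air at about 20 degrees C

def delay_ms(distance_m: float, speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Acoustic travel time over distance_m, in milliseconds."""
    return distance_m / speed_m_s * 1000.0

# Hypothetical distances from the stage to each delay loudspeaker zone.
zones_m = {"front fill": 5.0, "mid-room": 20.0, "rear": 40.0}

for zone, distance in zones_m.items():
    print(f"{zone}: delay of roughly {delay_ms(distance):.0f} ms")
```

Sound takes about 3 ms to travel one meter, so a loudspeaker 40 m from the stage would start with a delay somewhere near 117 ms under these assumptions.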

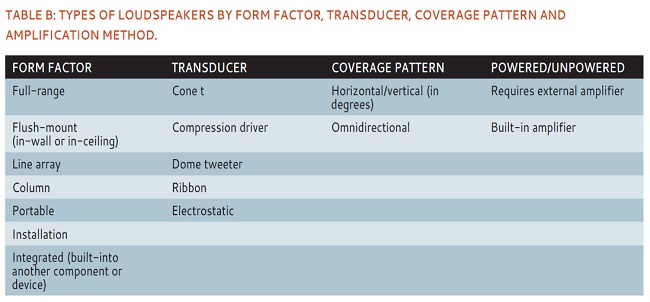

Loudspeakers, like microphones, come in a wide variety of types and form factors. Their selection is guided by the specific application and other considerations.

WHEN THEY’RE ONE AND THE SAME

So far, we’ve considered the sound production and listening environments as separate spaces. Yet in live AV, it’s more likely that it all happens in the same space, right? Even within a large space, the acoustical conditions at the microphone and in the audience area can be quite different.

Consider a stage presentation (business meeting, sales presentation, panel discussion, musical event, etc.). A stage area is usually elevated above the audience area, sometimes bordered on several sides by heavy draping. Since the stage area is usually smaller than where the listeners are located, the immediate acoustic environment in the vicinity of the microphone is smaller, and therefore, different. Microphones are pointed toward the presenters and away from the audience area, so (in the case of directional microphones), the acoustical conditions in the listening space have less effect on what goes into the microphone.

Similarly, loudspeakers are, by definition, intended to project sound to larger spaces. Depending on the décor and furnishings in the listening area, the acoustical environment in which the loudspeakers work can also be dramatically different from the one where the sound is initially produced.

Achieving good sound quality for any live AV event requires more than proper sound system design and equipment selection. It’s important to consider the conditions of the space in which the sound system will operate, both where the sound goes “in,” and where it comes “out.” Simple enough?

Mark Mayfield is a long-time pro audio industry expert and a contributing editor for AV Technology.